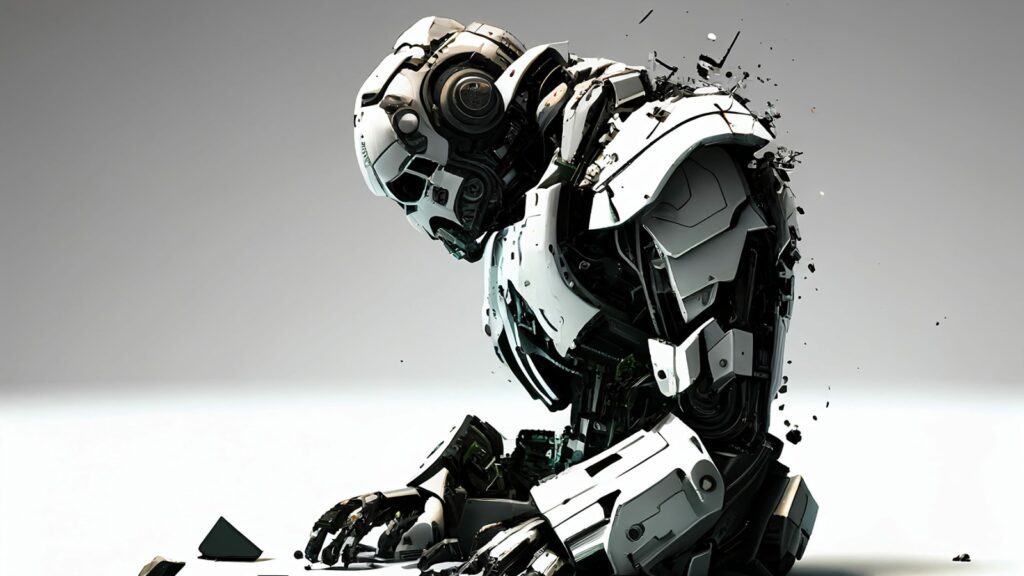

Brands Are Beginning to Turn Against AI

To hear it from Apple or Elon Musk, AI is our inevitable future, one that will radically reshape life as we know it whether we like it or not. In the calculus of Silicon Valley, what matters is getting there first and carving out the territory so that everyone will be reliant on your tools for years to come. When speaking to Congress, at least, someone like OpenAI CEO Sam Altman will mention the dangers of artificial intelligence and the need for strong regulatory oversight, but in the meantime, it’s full steam ahead.

Plenty of corporate and individual actors are buying into the hype, often with disastrous results. Numerous media outlets have been caught publishing AI-generated garbage under fictitious names; Google has buried its search results under bogus “AI Overview” content; earlier this year, parents were outraged to learn that a Willy Wonka-themed pop-up family event in Scotland had been marketed to them with AI images bearing no resemblance to the grim warehouse setting they entered. Amid all this discontent, it would seem there is a new marketing opportunity to be seized: become part of an anti-AI, pro-human counteraction.

The beauty brand Dove, owned by the multinational conglomerate Unilever, made headlines in April by pledging “to never use AI-generated content to represent real women in its advertisements,” per a company statement. Dove explained the choice as one that aligned with its successful and ongoing “Real Beauty” campaign, first launched in 2004, which saw professional models replaced by “regular” women in advertisements that focused more on the consumer than the products. “Pledging to never use AI in our communications is just one step,” said Dove’s chief marketing officer, Alessandro Manfredi, in the press release. “We will not stop until beauty is a source of happiness, not anxiety, for every woman and girl.”

But if Dove took a hard stance against AI in order to protect a specific brand value around body image, other brands and ad agencies are worried about the broader reputational risk that comes with reliance on automated, generative content that bypasses human scrutiny. As Ad Age and other industry publications have reported, contracts between companies and their marketing firms are now more likely to include strong restrictions on how AI is used and who can sign off on it. These provisions don’t just help to prevent low-quality, AI-spawned images or copy from embarrassing these clients on the public stage — they can also curtail reliance on artificial intelligence in internal operations.

Meanwhile, creative social platforms are staking out zones meant to remain AI-free, and getting good customer feedback as a result. Cara, a new artist portfolio site, is still in beta testing but has sparked significant buzz among visual artists because of its proudly anti-AI ethos. “With the widespread use of generative AI, we decided to build a place that filters out generative AI images so that people who want to find authentic creatives and artwork can do so easily,” the app’s site declares. Cara also aims to protect its users from the scraping of user data to train AI models, a condition automatically imposed on anyone uploading their work to the Meta platforms Instagram and Facebook.

“Cara’s mission began as a protest against unethical practices by AI companies scraping the internet for their generative AI models without consent or respect for people’s rights or privacy,” a company representative tells Rolling Stone. “This core tenet of being against such unethical practices and the lack of legislation protecting artists and individuals is what fueled our decision to refuse to host AI-generated images.” They add that because AI tooling is likely to become more common across creative industries, they “want to act and see legislation passed that will protect artists and our intellectual property from the current practices.”

Older sites in this space are looking to add similar safeguards. PosterSpy, another portfolio site that helps poster artists network and secure paid commissions, has been a vibrant community since 2013, and founder Jack Woodhams wants to keep it a haven for human talent. “I have a pretty strict no-AI policy,” he tells Rolling Stone. “The website exists to champion artists, and although users of generative AI consider themselves artists, that couldn’t be further from the truth. I’ve worked with real artists all over the world, from up-and-coming talent to household names, and comparing the blood, sweat, and tears these artists put into their work to a prompt in an AI generator is insulting,” Woodhams says, to “the real artists out there who have trained for years to be as skilled as they are today.”

Some of the pressure to set these standards comes from the customers themselves. Game publisher Wizards of the Coast, for example, has repeatedly faced outrage from fans over the use of AI in products for its Dungeons and Dragons and Magic: The Gathering franchises, despite the company’s various pledges to keep AI-generated images and writing out of the franchises and commit to the “innovation, ingenuity, and hard work of talented people.” When the company recently posted a job listing for a Principal AI Engineer, consumers again sounded the alarm, forcing Wizards of the Coast to clarify that it is experimenting with AI in video game development, not its tabletop games. The back-and-forth demonstrates the perils for brands that try to sidestep the debates over this technology.

It’s also a measure of the vigilance needed to forestall a complete AI takeover. On Reddit, which does not have a blanket policy against generative AI, it’s up to community moderators to prohibit or remove such material as they see fit. The company has so far only argued that anyone looking to train AI models on its public data must agree to a formal business deal with it, with CEO Steve Huffman warning that he may report those who don’t to the Federal Trade Commission. The publishing platform Medium has been slightly more aggressive. “We block OpenAI because they’ve given us a protocol for blocking them, and we would block basically everyone if we had a way to do that,” CEO Tony Stubblebine tells Rolling Stone.

At the same time, Stubblebine says, Medium relies on curators to stem a tide of “bullshit” he sees washing through the internet in the age of nascent AI, preventing any of it from being recommended to users. “There is no good tool for spotting AI-generated content right now,” he says, “but humans spot it immediately.” At this point, not even the filtering of automated content can be fully automated. “We used to delete a million spam posts a month,” Stubblebine notes. “Now we delete 10 million.” For him, it’s a way to ensure that real writers maintain fair exposure and subscribers can discover writing that speaks to them. “There’s this huge gap between what someone will click on and what someone will be happy to have paid to read,” Stubblebine says, and those who provide the latter may reap the rewards as the web grows cluttered with the former. Even Google’s YouTube has promised to add warning labels on videos that have been “altered” or “synthetically created” with AI tools.

It’s hard to guess whether institutional resistance to AI will continue to gather momentum, though between a pattern of high-profile AI failures and a rising distrust in the technology, companies that effectively oppose it in one form or another seem poised to weather the hype cycle (not to mention the fallout of the burst bubble some observers predict). Then again, should AI go on to dominate the culture, they could be left to serve a smaller demographic that insists on AI-free products and experiences. As in all strategic business decisions, it’s too bad there’s no bot that predicts the future.